Mercury Model: Revolutionizing Language Generation with Diffusion-Based AI

In the ever-evolving landscape of artificial intelligence, a new generation of language models is emerging. The Mercury Model, developed by Inception Labs, sets a bold new precedent by using diffusion-based methods to generate text. What does that mean? Instead of following the conventional autoregressive approach, which writes text left to right, Mercury generates the whole output at once and refines it over several revisions. This promises faster, more efficient, and higher-quality outputs that could redefine AI applications across industries.

Breaking the Autoregressive Mold

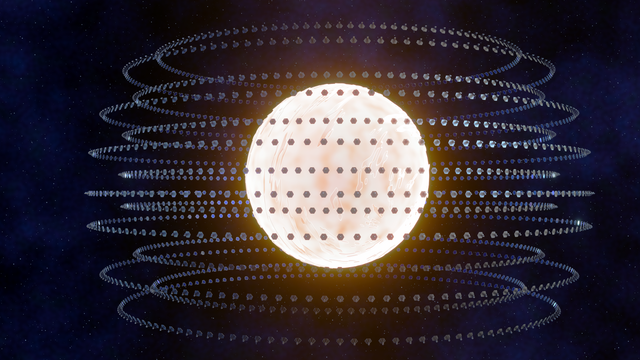

Traditional large language models (LLMs) generate text one token at a time. This autoregressive method, while powerful, inherently limits speed and incurs high inference costs, especially when complex reasoning is involved. Mercury’s breakthrough comes from adapting diffusion techniques, previously successful in image and audio generation, to the realm of text. Instead of sequentially predicting words, Mercury uses a coarse-to-fine generation process that starts with a rough, noisy approximation of the text and iteratively refines it through multiple denoising steps. This approach not only accelerates text generation but also improves the model’s ability to correct its own errors and reduce hallucinations. The reason is that an autoregressive model cannot go back and change a token once it has been written, so one wrong word can throw off the model’s whole “train of thought.” A diffusion model can still revise any part of the draft on a later denoising step.
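To make the contrast concrete, here is a toy Python sketch. It is not Mercury’s actual algorithm: the function names, the tiny vocabulary, and the “denoiser” (which simply peeks at a known target sentence) are all assumptions made for illustration. It only shows the shape of the two loops: one sequential step per token versus a fixed number of passes that revise the whole draft.

```python
import random

# Hypothetical toy vocabulary used to build the noisy first draft.
VOCAB = ["the", "quick", "brown", "fox", "jumps", "over", "lazy", "dog"]


def autoregressive_generate(target):
    """Emit one token per sequential step; written tokens are never revisited."""
    output = []
    for token in target:  # a real model would sample from p(token | prefix)
        output.append(token)
    return output, len(output)  # (tokens, number of sequential steps)


def toy_denoise_step(draft, target, fraction, rng):
    """Hypothetical stand-in for a learned denoiser.

    It peeks at the target and overwrites a fraction of the wrong positions,
    anywhere in the sequence, in a single pass.
    """
    wrong = [i for i, (d, t) in enumerate(zip(draft, target)) if d != t]
    if not wrong:
        return list(draft)
    count = max(1, int(len(wrong) * fraction))
    revised = list(draft)
    for i in rng.sample(wrong, count):
        revised[i] = target[i]
    return revised


def diffusion_style_generate(target, num_steps=4, seed=0):
    """Start from random tokens and refine the whole draft over num_steps passes."""
    rng = random.Random(seed)
    draft = [rng.choice(VOCAB) for _ in target]  # rough, noisy first draft
    history = [draft]
    for step in range(num_steps):
        remaining = num_steps - step
        # Fix 1/remaining of the errors each pass, so the last pass fixes them all.
        draft = toy_denoise_step(draft, target, 1.0 / remaining, rng)
        history.append(draft)
    return draft, history


if __name__ == "__main__":
    target = "the quick brown fox jumps over the lazy dog".split()
    _, ar_steps = autoregressive_generate(target)
    final, history = diffusion_style_generate(target, num_steps=4)
    for i, draft in enumerate(history):
        print(f"pass {i}: {' '.join(draft)}")
    print(f"autoregressive steps: {ar_steps}, refinement passes: {len(history) - 1}")
```

In this toy, the nine-word sentence takes nine sequential steps in the autoregressive loop but only four refinement passes, and a wrong token anywhere in the draft can be overwritten on a later pass. A real diffusion language model would replace `toy_denoise_step` with a trained network that predicts the corrections itself.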

How Diffusion Works So Well with Mercury

Diffusion models have long dominated generative visual art, powering tools such as DALL·E and Midjourney. Adapting these methods to language, however, has presented unique challenges, because text is made of discrete tokens bound by the rigid structure of grammar and syntax. Mercury overcomes these hurdles by using a parallel refinement process. Rather than being bound to sequential generation, Mercury refines blocks of text simultaneously, which gives it a global view of sentence structure and context. The result is dramatically improved throughput: Mercury achieves over 1,000 tokens per second on commodity NVIDIA H100 GPUs, outpacing even the most speed-optimized autoregressive models by up to 10 times. And that’s just one card running the model; imagine 50!
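To put those throughput figures in perspective, the short calculation below turns them into wait times. The 500-token response length is an assumption chosen for illustration, and the baseline speed is simply the quoted 1,000 tokens per second divided by the best-case factor of 10, not a measured number for any particular model.

```python
def generation_seconds(num_tokens, tokens_per_second):
    """Time to produce num_tokens at a steady throughput."""
    return num_tokens / tokens_per_second


MERCURY_TPS = 1000               # "over 1,000 tokens per second" (lower bound)
BASELINE_TPS = MERCURY_TPS / 10  # implied by "up to 10 times" (best case)
RESPONSE_TOKENS = 500            # assumed response length, for illustration only

if __name__ == "__main__":
    fast = generation_seconds(RESPONSE_TOKENS, MERCURY_TPS)
    slow = generation_seconds(RESPONSE_TOKENS, BASELINE_TPS)
    print(f"diffusion-based: {fast:.1f} s, autoregressive baseline: {slow:.1f} s")
```

Under these assumptions, a 500-token answer arrives in about half a second instead of about five seconds, which is the difference between a reply that feels instant and one that makes the user wait.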

Implications for the AI Ecosystem

The advancements brought by the Mercury Model have broad implications:

- Reduced Latency for Real-Time Applications: Faster generation speeds translate into smoother experiences in conversational AI, customer support, and interactive platforms.

- Cost-Effective AI Deployments: By lowering inference costs, Mercury enables enterprises to deploy more capable models without sacrificing performance or increasing overhead.

- Versatility Across Domains: Mercury’s design is adaptable to a range of applications—from natural language understanding to complex code synthesis—opening doors to innovative use cases in enterprise automation, research, and creative industries.

Industry experts believe that Mercury’s diffusion-based architecture could herald a paradigm shift. As diffusion models continue to mature, they might soon become the backbone for next-generation LLMs, offering unprecedented speed and quality across a spectrum of applications.

Looking Forward

The Mercury Model represents not just an incremental improvement but a transformative reimagining of language generation. With diffusion-based techniques enabling a leap forward in both speed and efficiency, Mercury is poised to set new standards in AI performance. As early adopters begin integrating Mercury into their workflows, we may soon witness a widespread industry shift—from traditional autoregressive methods to the dynamic, high-throughput world of diffusion-based LLMs.

In the coming years, as diffusion models refine and expand their capabilities, the landscape of AI-powered text generation is likely to undergo dramatic changes, with Mercury leading the charge into a future where speed, efficiency, and quality are no longer mutually exclusive.